Social Context Learning

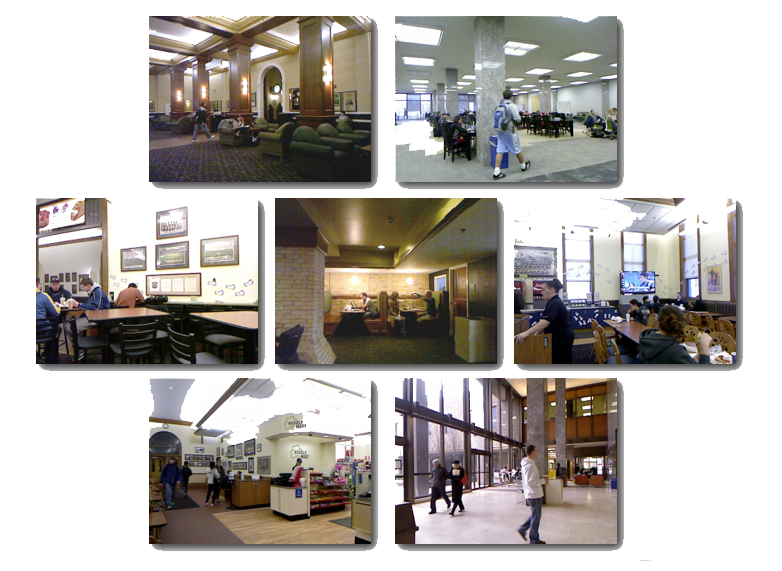

Context plays an important role in shaping human behavior in social spaces. As robots enter the same social spaces as people, they need to interact naturally and fluently. To operate independently, robots should be adaptable and able to conform to their environment.

Our work focuses on learning social cues from high-level context. We approach this problem by applying machine learning to multimodal video of social scenes to classify events and predict the actions of the people within them. In addition to helping increase the social intelligence of robots, this work also informs the field of psycholinguistics.

Selected Publications:

Nigam, A. and Riek, L.D. (2015). "Social Context Perception for Mobile Robots". In 2015 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS).

O'Connor, M.F. and Riek, L.D. (2015). "Detecting Social Context: A Method for Social Event Classification Using Naturalistic Multimodal Data". In Proceedings of the 11th IEEE International Conference and Workshops on Automatic Face and Gesture Recognition (FG).

Hayes, C.J., O'Connor, M.F., and Riek, L.D. (2014). "Avoiding Robot Faux Pas: Using Social Context to Teach Robots Behavioral Propriety". In Proceedings of the 9th ACM/IEEE International Conference on Human-Robot Interaction (HRI). [pdf]

Riek, L.D. and Robinson, P. (2011). "Challenges and Opportunities in Building Socially Intelligent Machines". IEEE Signal Processing. Vol. 28, Issue 3, p. 146-149. [pdf]